+

+

+

+

+

+

+

+  +

+

+

+

+

+

+

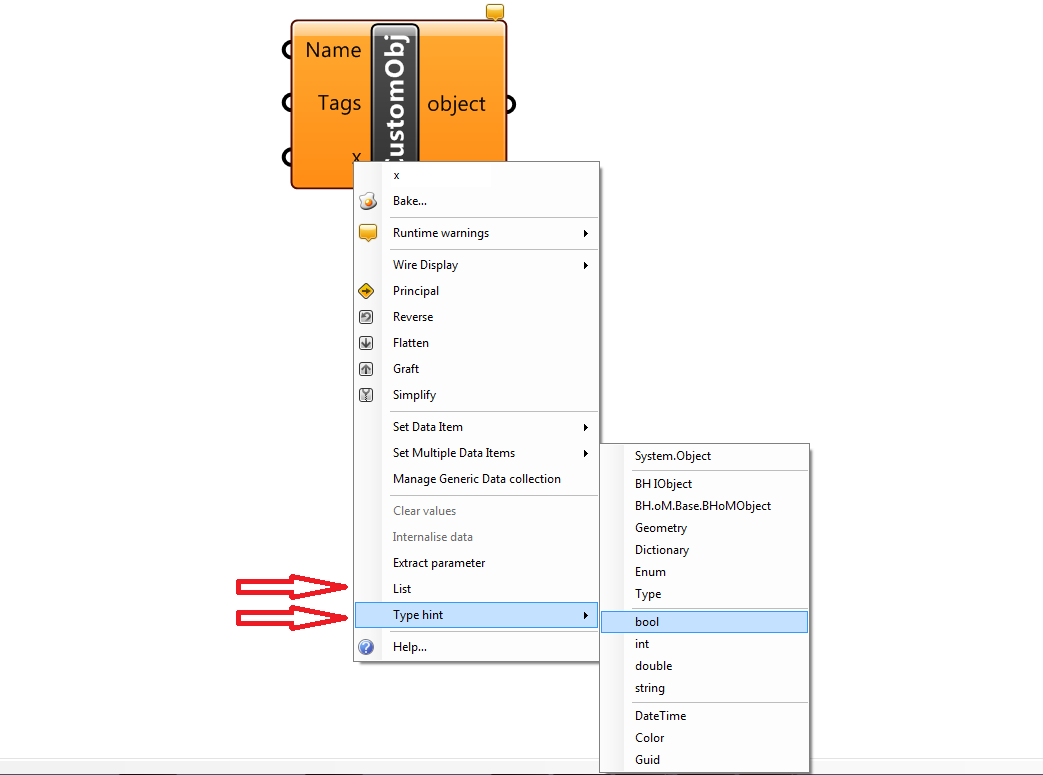

+ The Adapter Actions have some optional inputs that allow to refine their behaviour.

+Note

+This page can be seen as an optional Appendix to the pages Introduction to BHoM_Adapter and Adapter Actions.

+These optional inputs are:

+The ActionConfig is an object type used to specify any kind of Configuration that might be used by the Adapter Actions.

+This means that it can contain configurations that are specific to certain Actions (e.g. only to the Push, only to the Pull), and that a certain Push might be activated with a different Push ActionConfig than another one. This makes the ActionConfig different from the Adapter Settings (which are static global settings).

The base ActionConfig provides some configurations that are available to all Toolkits (you can find more info about those in the code itself).

+You can inherit from the base ActionConfig to specify your own in your Toolkit. For example, if you are in the SpeckleToolkit, you will be able to find: +- SpecklePushConfig: inherits from ActionConfig +- SpecklePullConfig: inherits from ActionConfig

+this allows some data to be specified when Pushing/Pulling.

+ActionConfig is an input to all Adapter methods, so you can reference configurations in any method you might want to override.

+ +Requests are an input to the Pull adapter Action.

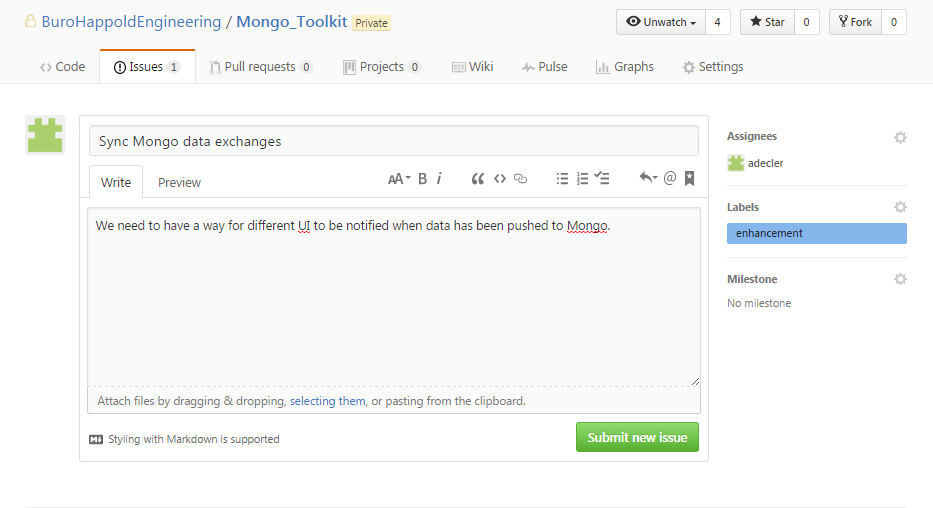

+They were formerly called Queries and are exactly that: Queries. You can specify a Request to do a variety of things that always involve Pulling data in from an external application or platform. For example: +- you can Request the results of an FE analysis from a connected FEM software, +- specify a GetRequest when using the HTTP_Toolkit to download some data from an online RESTFul Endpoint +- query a connected Database, for example when using Mongo_Toolkit.

+Requests can be defined in Toolkits to be working specifically with it.

+You can find some requests that are compatible with all Toolkits in the base BHoM object model. +An example of those is the FilterRequest.

+The FilterRequest is a common type of request that basically requests objects by some specified type. See FilterRequest.

In general, however, Requests can range from simple filters to define the object you want to be sent, to elaborated ones where you are asking the external tool to run a series of complex data manipulation and calculation before sending you the result.

+++ +Additional note: batch requests

+For the case of complex queries that need to be executed batched together without returning intermediate results, you can use a

+BatchRequest.Additional note: Mongo requests

+For those that use Mongo already, you might have noticed how much faster and convenient it is to let the database do the work for you instead doing that in Grasshopper. It also speeds up the data transfer in cases where the result is small in bytes but involves a lot of data to be calculated.

+

When objects are pushed, it is important to have a way to know which objects needs to be Updated, the new ones to be Created, and the old ones to be Deleted.

If the number of objects changes between pushes, you cannot rely on unique identifiers to match the objects one-to-one. The problem is especially clear when you are pushing less objects than the last push.

+Attaching a unique tag to all the objects being pushed as a group is a lightweight and flexible way to find those objects later.

+++For those using D3.js, this is similar to attaching a class to html elements. For those using Mongo or Flux, this is similar to the concept of key.

+

At the moment, each external software will likely require a different solution to attach the tags to the objects.

+If the software doesn't provide any solution to store the tag attached to the objects (e.g., like Groups), we could make use of another appropriate field to store the tag, for example the Name field that is quite commonly found.

+In case you need to use the Name field of the external object model, the format we are using for that is (example for an object with three tags): +

+For an in depth explanation on how tags are used and what you should be implementing for them to work, read the Push section of our Adapter Actions page; in particular, look at the practical example.

+ + +

+

+

+

+

+

+

+ After covering the basics in Introduction to BHoM_Adapter, this page explains the Adapter Actions more in detail, including their underlying mechanism.

+After reading this you should be all set to develop your own BHoM Toolkit! 🚀

+Note

+Before reading this page, make sure you have read the Introduction to BHoM_Adapter.

+As we saw before, the Adapter Actions are backed by what we call CRUD methods. Let's see what that means.

+A very common paradigm that describes all the possible action types is CRUD. This paradigm says that, regardless of the connection being made, the connector actions can be always categorised as: +* Create = add new entries +* Read = retrieve, search, or view existing entries +* Update = edit existing entries +* Delete = deactivate, or remove existing entries

+Some initial considerations:

+Read and Delete are quite self-explanatory; regardless of the context, they are usually quite straightforward to implement.

+Create and Update, on the other hand, can sometimes overlap, depending on the interface we have at our disposal (generally, the external software API can be limiting in that regard).

+Exposing directly these methods would make the User Experience quite complicated. Imagine having to split the various objects composing your model into the objects that need to be Created, the ones that needs to be Updated, and so on. Not nice.

We need something simpler from an UI perspective, while retaining the advantages of CRUD - namely, their limited scope makes them simple to implement.

+The answer is the Adapter Actions: they take care of calling the CRUD methods in the most appropriate way for both the user and the developer.

Let's consider for example the case where we are pushing BHoM objects from Grasshopper to an external software.

+The first time those objects are Pushed, we expect them to be Created in the external software.

+The following times, we expect the existing objects to be Updated with the parameters specified in the new ones.

++In detail: Why the "Actions-CRUD" paradigm?

+This paradigm allows us to extend the capabilities of the CRUD methods alone, while keeping the User Experience as simple as possible; it does so mainly through the Push. The Push, in fact, can take care for the user of doing

+CreateorUpdateorDeletewhen most appropriate – based on the objects that have beenReadfrom the external model.The rest of the Adapter Actions mostly have a 1:1 correspondence with the backing CRUD methods; for example, Pull calls

+Read, but its scope can be expanded to do something in addition to only Reading. This way,Readis "action-agnostic", and can be used from other Adapter Actions (most notably, the Push). You writeReadonce, and you can use it in two different actions!Side note: Why using five different Actions (Push, Pull, Move, Remove, Execute)...

+... and not something simpler, like "Export" and "Import"?

+

+... or just exposing the CRUD methods?

+The reason is that the methods available to the user need to cover all possible use cases, while being simple to use. +We could have limited the Adapter Actions to only Push and Pull – that does in fact correspond to Export and Import, and are the most commonly used – but that would have left out some of the functionality that you can obtain with the CRUD methods (for example, the Deletion).On the other hand, exposing directly the CRUD methods would not satisfy the criteria of simplicity of use for the User. +Imagine having to Read an external model, then having manually to divide the objects in the ones to be

+Updated, the ones to beDeleted, then separately callingCreatefor the new ones you just added... Not really simple! The Push takes care of that instead.Side note: Other advantages of the "Actions-CRUD" paradigm

+We've explained how this paradigm allows us to cover all possible use cases while being simple from an User perspective. In addition, it allows us to: +1) ensure consistency across the many, different implementations of the BHoM_Adapter in different Toolkits and contexts, therefore: +2) ensuring consistency from the User perspective (all UIs have the same Adapter Actions, backed by different CRUD methods) +3) maximise code scalability +4) Ease of development – learn once, implement everywhere

+

The paragraphs that follow down below are dedicated to explaining the relationship between the CRUD methods and the Adapter Actions.

+For first time developers, this is not essential – you just need to assume that the CRUD methods are called by the Adapter Actions when appropriate.

+You may now want to jump to our guide to build a BHoM Toolkit.

++You will read more about the CRUD methods and how you should implement them in their dedicated page that you should read after the BHoM_Toolkit page.

+

Otherwise, keep reading.

+We can now fully understand the Adapter Actions, complete of their relationships with their backing CRUD methods.

+The Push action is defined as follows: +

public virtual List<object> Push(IEnumerable<object> objects, string tag = "", PushType pushType = PushType.AdapterDefault, ActionConfig actionConfig = null)

+This method exports the objects using different combinations of the CRUD methods as appropriate, depending on the PushType input.

Let's see again how we described the Push mechanism in the previous page:

+++The Push takes the input

+objectsand: + - if they don't exist in the external model yet, they are created brand new; + - if they exist in the external model, they will be updated (edited); + - under some particular circumstances and for specific software, if some objects in the external software are deemed to be "old", the Push will delete those.

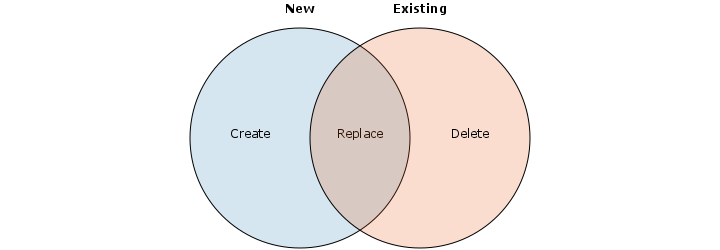

The determination of the object status (new, old or edited) is done through a "Venn Diagram" mechanism:

+

The Venn Diagram is a BHoM object class that can be created with any Comparer that you might have for the objects. It compares the objects with the given rule (the Comparer) and returns the objects belonging to one of two groups, and the intersection (objects belonging to both groups).

During the Push, the two sets of objects being compared are the objects currently being pushed, or objectsToPush, and the ones that have been read from the external model, or existingObjects.

This is the reason why the first CRUD method that the Push will attempt to invoke is Read. The Push is an export, but you need to check what objects exist in the external model first if you want do decide what and how to export.

++Additional note: custom Comparers

+Once the

+existingObjectsare at hand, it's easy to compare them with theobjectsToPushthrough the Venn Diagram. Even if no specific comparer for the object has been written, the base C# IEqualityComparer will suffice to tell the two apart. If you want to have some specific way of comparing two objects (for example, if you think that two overlapping columns should be deemed the same no matter what theirNameproperty is), then you should define specific comparer for that type. You can see how to do that in the next page dedicated to the BHoM_Toolkit.

Now, let's think that we are pushing two columns: column A_new and column B_new; and that the external model has already two columns somewhere, column B_old and column C_old. B_new and B_old are located in the same position in the model space, they have all the same properties except the Name property.

We activate the Push.

+First, the external model is read. The existingObjects list now includes the two existing columns B_old and C_old.

Then a VennDiagram is invoked to compare the existingObjects with the objectsToPush (which are the two pushed columns A_new and B_new).

There is no existing object in the external model that corresponds to one of the columns being pushed. Easy peasy: Push will call Create this column for this category of objects. A_new is Created.

What does "deemed the same" means?

+It means that the Comparer has evaluated them to be the same. This does not exclude that there might be some property of the objects that the Comparer is deliberately skipping to compare.

For example, we might have a Comparer that says:

+++two overlapping columns should be deemed the same no matter what their

+Nameproperty is.

If so, columns B_new and B_old are deemed the same.

But then, we need to update the Name property of the column in the external model, with the most up-to-date Name from the object being pushed.

+Hence, we call Update for this category of objects.

+B_new is passed to the Update method.

What to do with this category of objects? What to do with C_old?

An easy answer would be "let's Delete 'em!", probably. However, if we simply did that, then we would force the user to always input, in the objectsToPush, also all the objects that do not need to be Deleted.

Which is what we ask the user to do anyway, but to a lesser scale. +Our approach is not to do anything to these objects, unless tags have been used.

+We assume that if the User wants the Delete method to be called for this category of objects, then the existing objects must have been pushed with a tag attached. If the tag of the objects being Pushed is the same of the existing objects, we deem those objects to be effectively old, calling Delete for them.

Let's imagine that our column C_old was originally pushed with the attached tag "basementColumns".

+If I'm currently pushing columns with the same tag "basementColumns", it means that I'm pushing the whole set of columns that should exist in the basement. Therefore, C_old is Deleted.

Let's say that I push a set of columns with the tag "basementColumns". Everything that those bars need to be fully defined – what we call the Dependant Objects, e.g. the bar end nodes (points), the bar section property, the bar material, etc. – will be pushed together with it, and with the same tag attached.

+Let's then say I then push another set of bars corresponding to an adjacent part of the building with the tag "groundFloorColumns".

+It could be that a column with the tag "basementColumns" has an endpoint that overlaps with the endpoint of another column tagged "groundFloorColumns". That endpoint is going to have two tags: basementColumns groundFloorColumns.

The overlapping elements will end up with two tags on them: "basementColumns" and "groundFloorColumns".

+Later, I do another push of columns tagged with the tag groundFloorColumns.

+Some objects come up as existing only in the external model and not among those being pushed.

+Since a tag is being used and checks out, I should be deleting all these objects.

+However, the overlapping endpoint should not be deleted; simply, groundFloorColumns should be removed from its tags.

We then call the IUpdateTags method for these objects (no call to Delete).

+That is a method that should be implemented in the Toolkit and whose only scope is to update the tags. Its implementation is left to the developer, but some examples can be seen in some of the existing adapters (GSA_Adapter).

This diagram summarises what we've been saying so far for the Push.

+

Since an image is worth a thousand words, we provide a complete flow diagram of the Push below. If you click on the image you can download it.

+This is really an advanced read that you might need only if you want to get into the nitty-gritty of the Push mechanism.

+ +The Pull action is defined as follows: +

public virtual IEnumerable<object> Pull(IRequest request, PullType pullType = PullType.AdapterDefault, ActionConfig actionConfig = null)

+This Action has a more 1:1 correspondence with the backing CRUD method: it is essentially a simple call to Read that grabs all the objects corresponding to the specified IRequest (which is, essentially, simply a query).

+There is some additional logic related to technicalities, for instance how we deal with different IRequests and different object types (IBHoMObject vs IObjects vs IResults, etc).

You can find more info on Requests in their related section of the Adapter Actions - Advanced parameters wiki page.

+Note that the method returns a list of object, because the pulled objects must not necessarily be limited to BHoM objects (you can import any other class/type, also from different Object Models).

Move performs a Pull and then a Push.

+

It's greatly helpful in converting a model from a software to another without having to load all the data in the UI (i.e., doing separately a Pull and then a Push), which would prove too computationally heavy for larger models.

+

The Remove action is defined as follows: +

+This method simply calls Delete.

+

You might find some Toolkits that, prior to calling Delete, add some logic to the Action, for example to deal with a particular input Request.

+The method returns the number of elements that have been removed from the external model.

+The Execute is defined as follows:

+public virtual Output<List<object>, bool> Execute(IExecuteCommand command, ActionConfig actionConfig = null)

+The Execute method provides a way to ask your software to do things that are not covered by the other methods. A few possible cases are asking the tool to run some calculations, print a report, save,... A dictionary of parameters is also provided if needed. In the case of print for example, it might be the folder where the file needs to be saved and the name given to the file.

+The method returns true if the command was executed successfully.

+Read on our guide to build a BHoM Toolkit.

+ + +

+

+

+

+

+

+

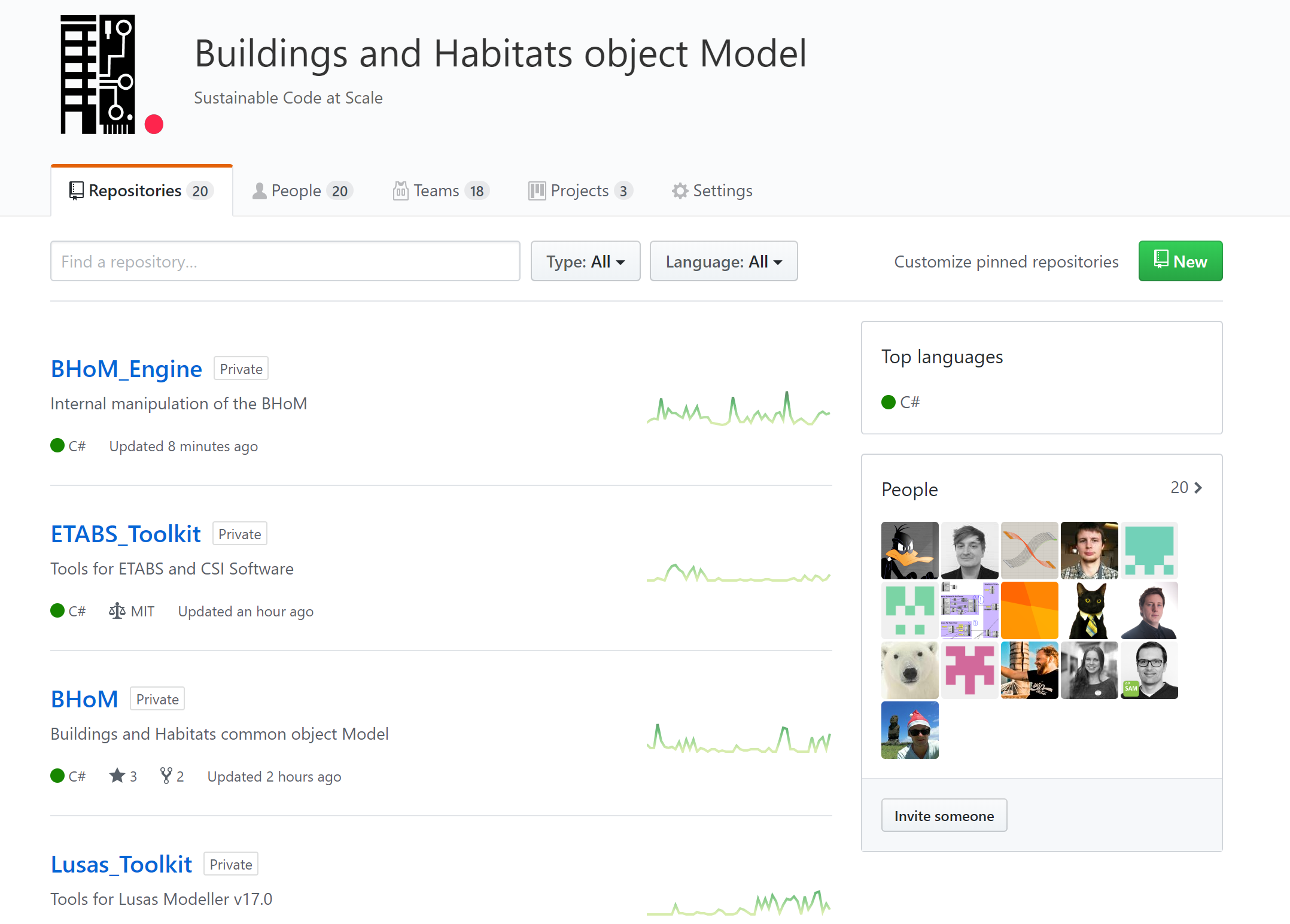

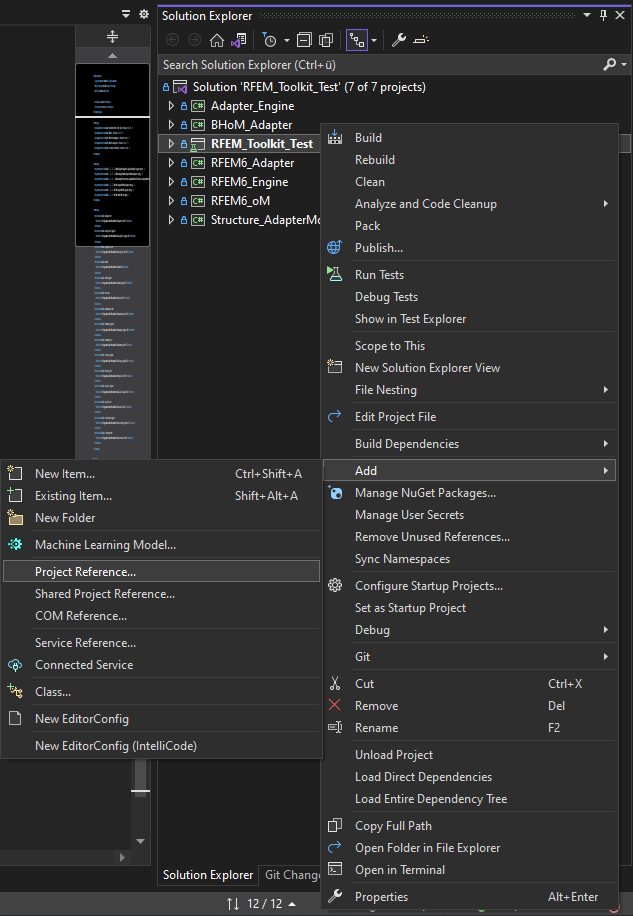

+ An adapter can be implemented in order to add conversion features from BHoM to another software, and vice versa.

+An adapter should be added to a dedicated Toolkit repository. See the page dedicated to the The BHoM Toolkit to learn how to set up a Toolkit, which can then contain an Adapter.

+Warning

+Before reading this page, please check out:

+ +The main Adapter file sits in the root of the Adapter project and must have a name in the format SoftwareNameAdapter.cs.

The content of this file should be limited to the following items:

+- The constructor of the Adapter. You should always have only one constructor for your Adapter.

+You may add input parameters to the constructor: these will appear in any UI when an user tries to create it.

+The constructor should define some or all of the Adapter properties:

+ - the Adapter Settings;

+ - the Adapter Dependency Types;

+ - the Adapter Comparers;

+ - the AdapterIdName;

+ - any other protected/private property as needed.

+- A few protected/private fields (methods or variables) that you might need share between all the Adapter files (given that the Adapter is a partial class, so you may share variables across different files). Please limit this to the essential.

If you want, you can override one or more of the Adapter Actions. This can be useful for quick development.

+All Action methods are defined as virtual, so you can override them.

In order to reuse the existing logic embedded in the Adapter Actions, you should not override them. This requires the implementation of CRUD methods which will be called by the Actions. Continue reading to learn more.

+The Adapter settings are general settings that can be used by the Adapter Actions and/or the CRUD methods.

+You can define them as you want; just consider that the settings are supposed to stay the same across any instance of the same adapter, i.e. the Adapter Settings are global static settings valid for all instances of your Toolkit Adapter. In other words, these settings are independent of what Action your Toolkit is doing (unlike the ActionConfig). If you want to create settings that affect a specific action, implement an ActionConfig instead.

The base BHoM_Adapter code gives you extensive explanation/descriptions/comments about the Adapter Settings.

+The CRUD folder should contain all the needed CRUD methods.

+You can see the CRUD methods implementation details in their dedicated page.

+Here we will cover a convention that we use in the code organisation: the CRUD "interface methods".

+In the template, you can see how for all CRUD method there is an interface method called ICreate, IRead, etc.

These interface methods are the ones called by the adapter. You can then create as many CRUD methods as you want, even one per each object type that you need to create. The interface method is the one that will be called as appropriate by the Adapter Actions. From there, you can dispatch to the other CRUD methods of the same type that you might have created.

+For example, in GSA_Toolkit you can find something similar to this: +

protected override bool ICreate<T>(IEnumerable<T> objects, ActionConfig actionConfig = null)

+ {

+ return CreateObject((obj as dynamic));

+ }

+The the statement CreateObject((obj as dynamic)) does what is called dynamic dispatching. It calls automatically other Create methods (called CreateObject - all overloading each other) that take different object types as input.

The mapping from the Adapter Actions to the CRUD methods does need some help from the developer of the Toolkit.

+This is generally done through additional methods and properties that need to be implemented or populated by the developer.

+This is an important concept:

+++BHoM does not define a relationship chain between most Object Types.

+

This is because our Object Model aims to be as abstract and context-free as possible, so it can be applied to all possible cases.

+If we were to define a relationship between all types, things would be more complicated than they already are. A typical scenario is the following. +Some FE analysis software define Loads (e.g. weight) as independent properties, that can be Created first and then applied to some objects (for example, to a beam). +Others require you to first define the object owning the Load (e.g. a beam), and then define the Load to be applied to it (the weight).

+We can't have a generalised relationship between the beams and the loads, because not all external software packages agree on that. We should pick one. So instead, we pick none.

+++Note: optional feature

+You can also avoid creating a relationship chain at all - if you are fine with exporting a flat collection of objects. You can activate/deactivate this Adapter feature by configure the Setting:

+m_AdapterSettings.HandleDependenciesto true or false. If you enable this, you must implementDependencyTypesas explained below.

We solve this situation by defining the DependencyTypes property:

+

The Toolkit developer should populate this accordingly to the inter-relationships that the BHoMObject hold in the perspective of the external software.

+The Dictionary key is the Type for which you want to define the Dependencies; the value is a List of Types that are the dependencies.

+An example from GSA_Toolkit: +

DependencyTypes = new Dictionary<Type, List<Type>>

+{

+ {typeof(BH.oM.Structure.Loads.Load<Node>), new List<Type> { typeof(Node) } },

+ ...

+}

+The comparison between objects is needed in many scenarios, most notably in the Push, when you need to tell an old object from a new one.

+In the same way that the BHoM Object model cannot define all possible relationships between the object types, it is also not possible to collect all possible ways of comparing the object with each other. Some software might want to compare two objects in a way, some in another.

+++Note: optional feature

+You can also avoid creating a default comparers - if you are fine for the BHoM to use the default C# IEqualityComparer.

+

By default, if no specific Comparer is defined in the Toolkit, the Adapter uses the IEqualityComparers to compare the objects.

+There are also some specific comparers for a few object types, most notably: +* Node comparer - by proximity +* BHoMObject name comparer

+However you may choose to specify different comparers for your Toolkit. You must specify them in the Adapter Constructor.

+An example from GSA_Toolkit: +

AdapterComparers = new Dictionary<Type, object>

+ {

+ {typeof(Bar), new BH.Engine.Structure.BarEndNodesDistanceComparer(3) },

+ ...

+ };

+ +

+

+

+

+

+

+

+ This page gives examples and outlines the general common behaviour of the adapters communicating with structural engineering software.

+To get an general introduction to how the adapters are working, and how to implement a new one please see the set of wiki pages starting from Introduction to the BHoM Adapter.

+For information regarding software specific adapter features, known issues and object relation tables, please see their toolkit wikis:

+ +Please see the samples for examples of how to push elements to a software using the adapters.

+The objects assigned to the loads need to have been in the software. The reason for this is that the objects need to have been tagged with a CustomData representing their identifier in the software. To achieve this you can

+Please see the samples for examples of how to push elements to a software using the adapters.

+Examples to be inserted

+ + +

+

+

+

+

+

+

+ ◀️ Previous read: The BHoM Toolkit and Adapter Actions

+++Note

+This page can be seen as an Appendix to the pages Adapter Actions and The BHoM Toolkit.

+

As we have seen, the CRUD methods are the support methods for the Adapter Actions. They are the methods that have to be implemented in the specific Toolkits and that differentiate one Toolkit from another.

+Their scope has to be well defined, as explained below.

+Note that the Base Adapter is constellated with comments (example) that can greatly help you out.

+Also the BHoM_Toolkit Visual Studio template contains lots of comments that can help you.

+Create must take care only of Creating, or exporting, the objects. +Anything else is out of its scope.

+For example, a logic that takes care of checking whether some object already exists in the External model – and, based on that, decides whether to export or not – cannot sit in the Create method, but has rather to be included in the Push. +This very case (checking existing object) is already covered by the Push logic.

+The main point is: keep the Create simple. It will be called when appropriate by the Push.

+The Create method scope should in general be limited to this: +- calling some conversion from BHoM to the object model of the specific software and a +- Use the external software API to export the objects.

+If no API calls are necessary to convert the objects, the best practice is to do this conversion in a ToSoftwareName file that extends the public static class Convert. See the GSA_Toolkit for an example of this.

If API calls are required for the conversion, it's best to include the conversion process directly in the Create method. See Robot_Toolkit for an example of this.

+In the Toolkit template, you will find some methods to get you started for creating BH.oM.Structure.Element.Bar objects.

This is a method for returning a free index that can be used in the creation process.

+Important method to implement to get pushing of dependant properties working correctly. Some more info given in the Toolkit template.

+The read method is responsible for reading the external model and returning all objects that respect some rule (or, simply, all of them).

+There are many available overloads for the Read. You should assume that any of them can be called "when appropriate" by the Push and Pull adapter actions.

+The Read method scope should in general be specular to the Create: +- Use the external software API to import the objects. +- Call some conversion from the object model of the specific software to the BHoM object model.

+Like for the Create, if no API calls are necessary to convert the objects, the best practice is to do this conversion in a FromSoftwareName file that extends the public static class Convert. See the GSA_Toolkit for an example of this.

Otherwise, if API calls are required for the conversion, it's best to include the conversion process directly in the Read method. See Robot_Toolkit for an example of this.

+The Update has to take care of copying properties from from a new version of an object (typically, the one currently being Pushed) to an old version of an object (typically, the one that has been Read from the external model).

+The update will be called when appropriate by the Push.

+If you have implemented your custom object Comparers and Dependency objects, then the CRUD method Update will be called for any objects deemed to already exist in the model.

Unlike the Create, Delete and Read, this method already exposes a simple implementation in the base Adapter, which may be enough for your purposes: it calls Delete and then Create.

+This is not exactly what Update should be – it should really be an "edit" without deletion, actually – but this base implementation can be useful in the first stages of a Toolkit development.

This base implementation can always be overridden at the Toolkit level for a more appropriate one instead.

+The Update has to take care of deleting an object from an external model. +The Delete is called by these Adapter Actions: the Remove and the Push. See the Adapter Actions page for more info.

+By default, an object with multiple tags on it will not be deleted; it only will get that tag removed from itself.

+This guaranties that elements created by other people/teams will not be damaged by your delete.

+ + +

+

+

+

+

+

+

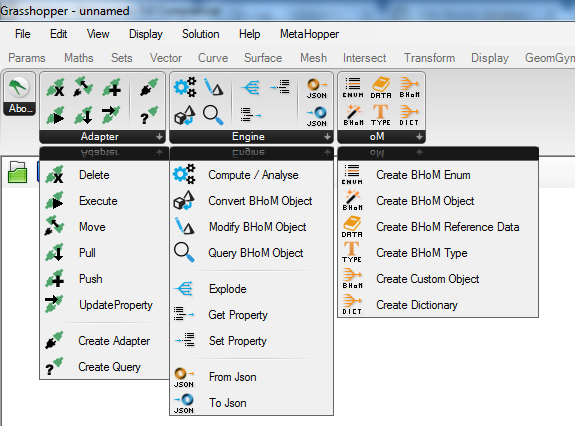

+ In this page you will find a first overview about what is the BHoM Adapter.

+Note

+▶️ Part of a series of pages. Next read: The Adapter Actions.

+Before reading this page, have a look at the following pages:

+ +and make sure you have a general understanding of:

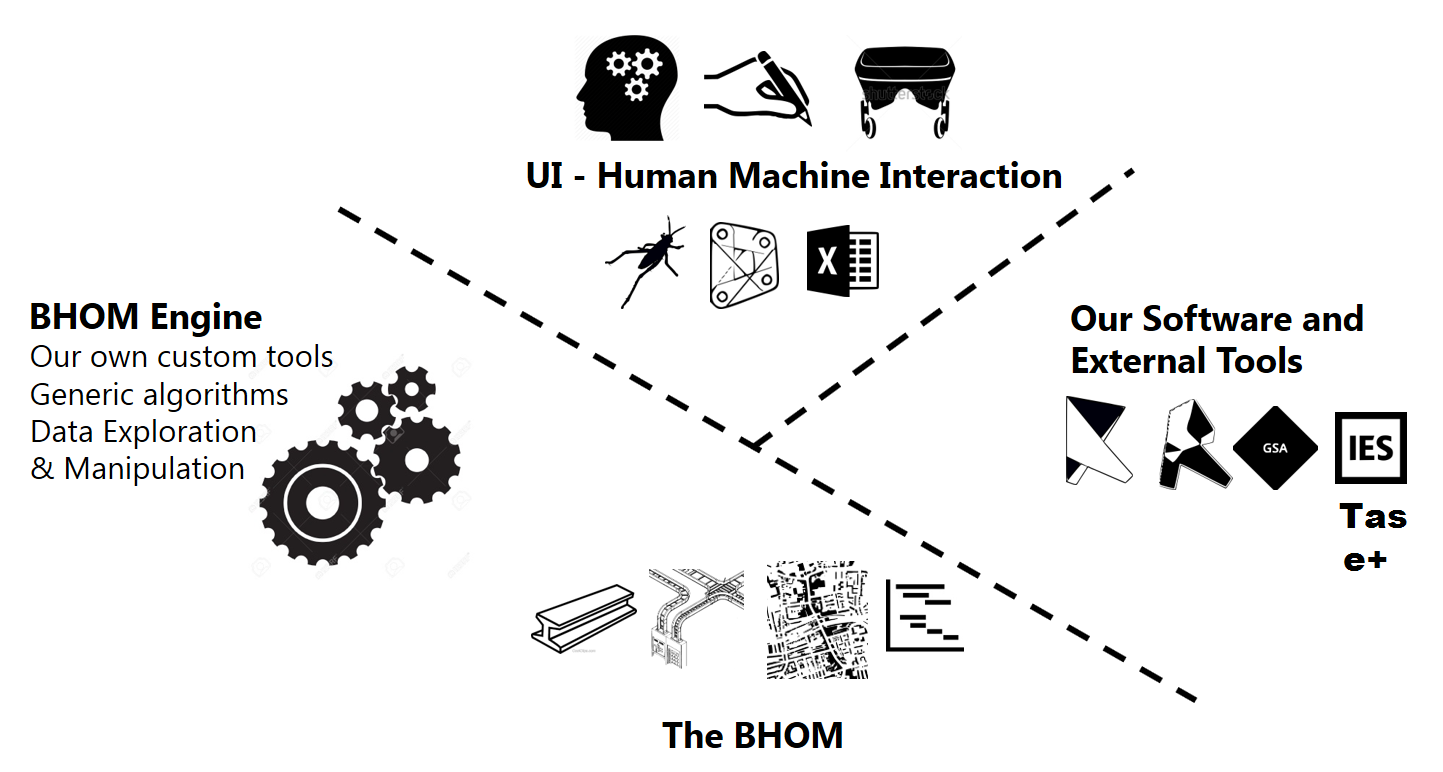

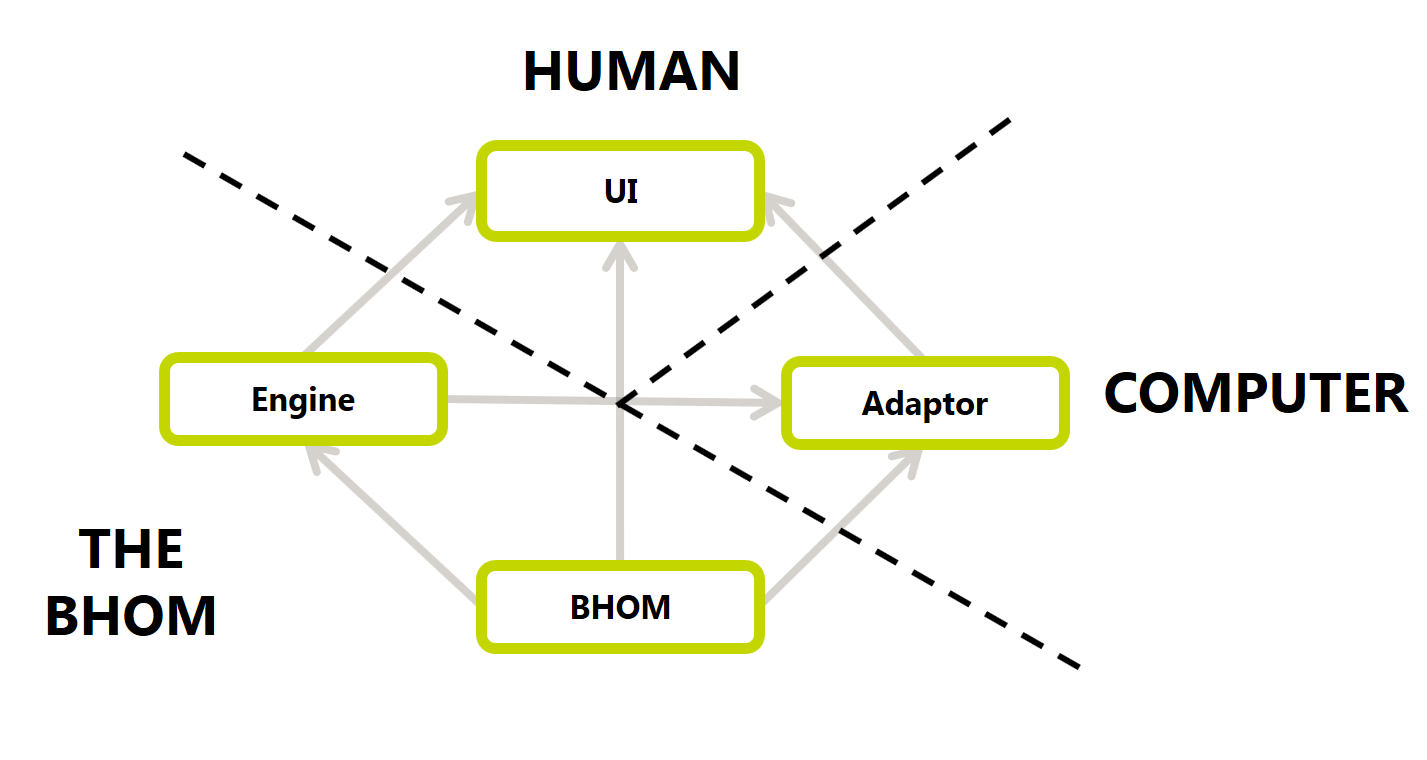

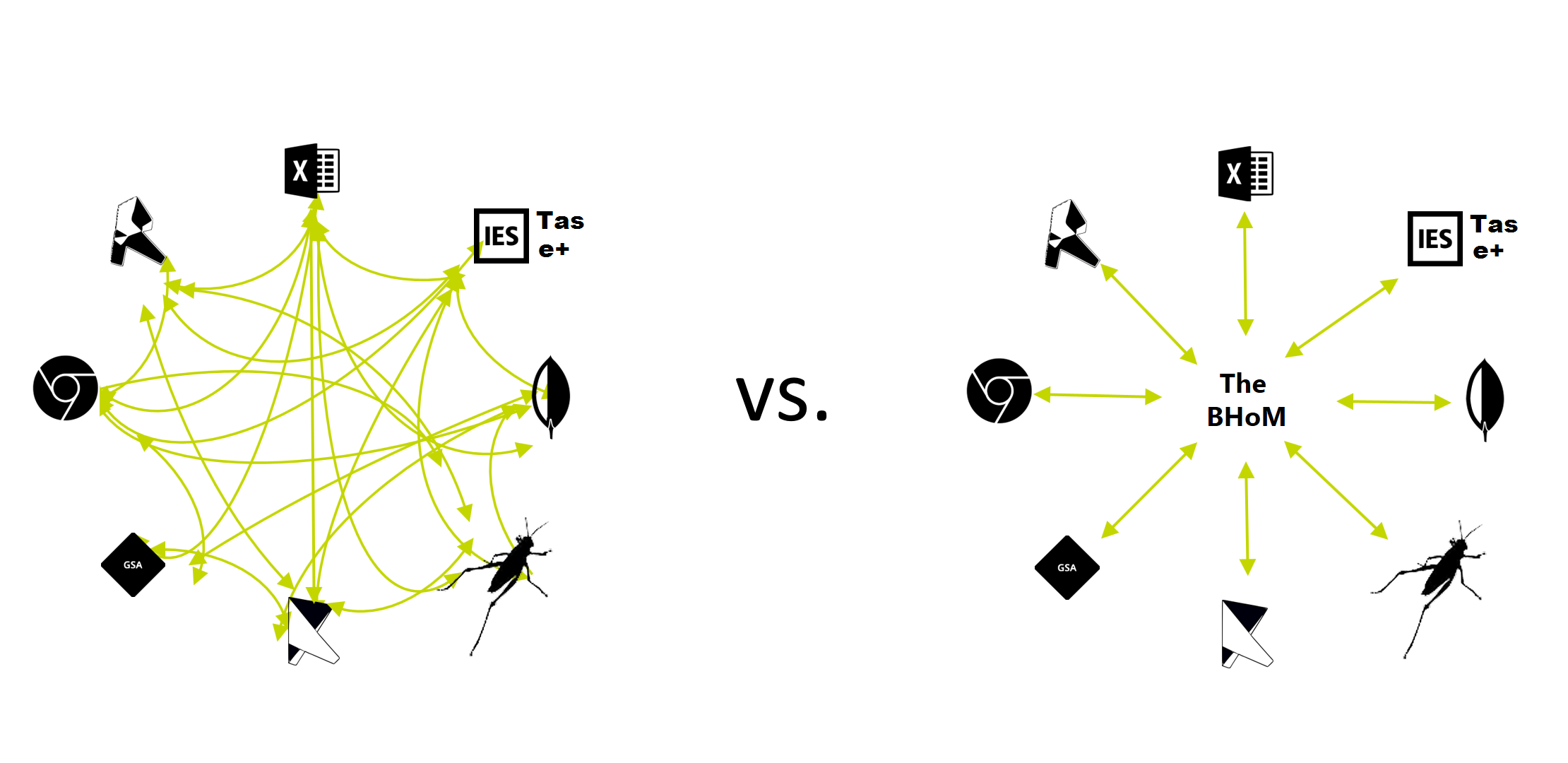

+As shown in the Structure of the BHoM framework, an adapter is the part of BHoM responsible to convert and send-receive data (import/export) with external software (e.g. Robot, Revit, etc.).

+In brief:

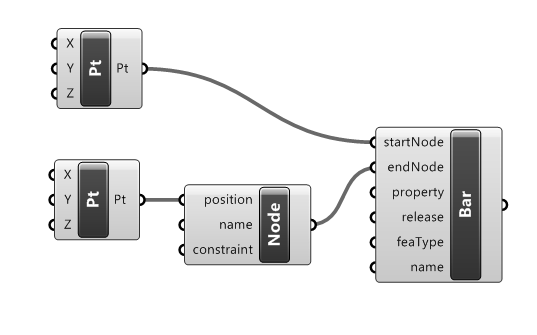

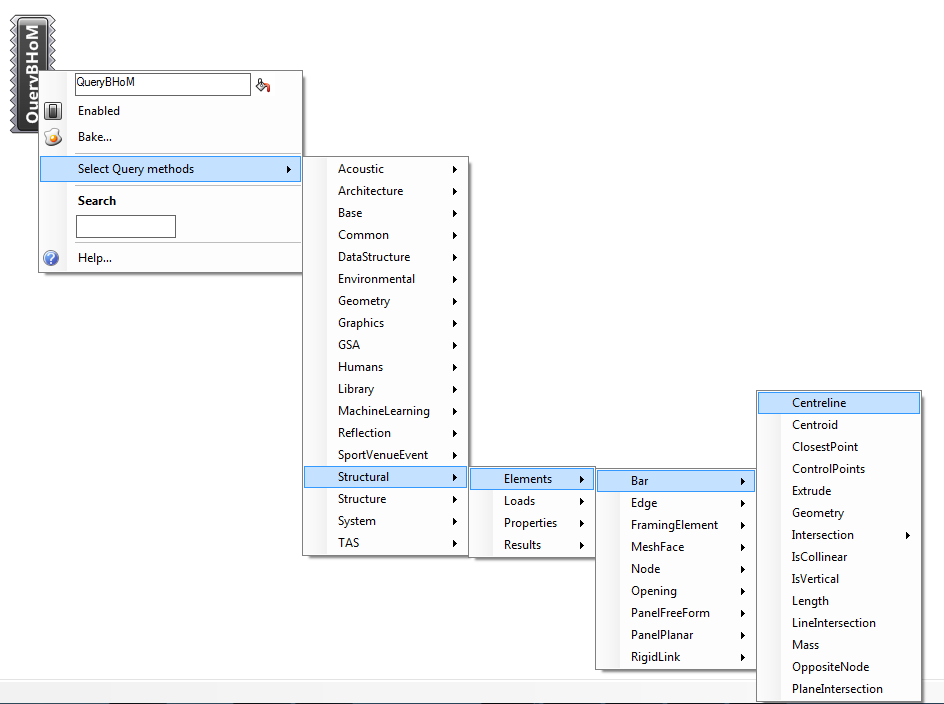

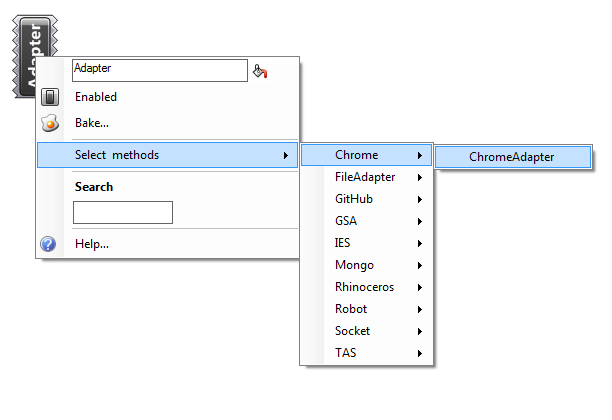

+Push, which means exporting from BHoM, and Pull, which means importing to BHoM).Depending on the UI Software you are using, you can create an Adapter component (in Grasshopper, or formula if you are in Excel) like this:

+Adapter component

+

The Adapter Actions are the way to communicate with an external software via an Adapter.

+Adapter Actions are BHoM components that you connect to a specific Adapter (e.g. Robot Adapter). Like any other BHoM component, are always look the same no matter what User Interface program you are using (Grasshopper, Excel, Dynamo...). In Grasshopper, there will be a component representing each action; in Dynamo, a node for each of them; in Excel, a formula will let you use them. You can find the Adapter Actions in the Adapter subcategory:

+Adapter Actions

+Select an Actions from the "Adapter" category, e.g. Push:

+

The selected action is instantiated as a component to which an adapter can be connected. You will need to specify also the objects and possibly other inputs; keep reading.

+

Select an Actions from the "Adapter" category, e.g. Push:

+

The selected action is instantiated as a formula to which an adapter can be connected. You will need to specify also the objects and possibly other inputs; keep reading.

+

Push (export) a BHoM model to RobotBefore looking at the Adapter Actions in more detail, see the following illustrative example of a Push to Robot.

+Note

+Although the Adapter actions always look the same, remember that each adapter may behave differently. Some adapters expect that you will use the Push with specific BHoM objects. For example, you can not push Architectural Rooms objects (BH.oM.Architecture.Room) to a Structural Adapter like RobotAdapter.

Illustrative example of Push

+Example file download: Example push GH.zip

+

Example file download: Example push Excel.zip

+

The following is a brief overview, more than enough for any user.

+A more in-detail explanation, for developers and/or curious users, is left in the next page of this wiki.

The first thing to understand is that the Adapter Actions do different things depending on the software they are targeting.

+In fact, the first input to any Adapter Action is always an Adapter, which targets a specific external software or platform. The first input Adapter is common to all Actions.

The last input to any Adapter action is an active Boolean, that can be True or False. If you insert the value True, the Action will be activated and it will do its thing. False, and it will sit comfortably not doing anything.

The most commonly used actions are the Push and the Pull. You can think of Push and Pull as Export and Import: they are your "portal" towards external software.

+Again, taking Grasshopper UI as an example, they look like this (but they always have the same inputs and outputs, even if you are using Excel or Dynamo):

+

The Push takes the input objects and:

+ - if they don't exist in the external model yet, they are created brand new;

+ - if they exist in the external model, they will be updated (edited);

+ - under some particular circumstances and for specific software, if some objects in the external software are deemed to be "old", the Push will delete those.

This method functionality varies widely depending on the software we are targeting. For example, it could do a thing as simple as simply writing a text representation of the input objects (like in the case of the File_Adapter) to taking care of object deletion and update (GSA_Adapter).

+In the most complete case, the Push takes care of many different things when activated: ID assignment, avoiding object duplication, distinguishing which object needs updating versus to be exported brand new, etc.

+The Pull simply grabs all the objects in the external model that satisfy the specified request (which simply is a query).

If no request is specified, depending on the attached adapter, it might be that all the objects of the connected model will be input, or simply nothing will be pulled. You can read more about the requests in the Adapter Actions - advanced parameters section.

Now, let's see the remaining "more advanced" Adapter Actions.

+Slightly more advanced Actions. Again taking Grasshopper as our UI of choice, they look like this:

+

Let's see what they do:

+Move: This will copy objects over from a source connected software to another target software. It basically does a Pull and then a Push, without flooding the UI memory with the model you are transferring (which would happen if you were to manually Pull the objects, and then input them into a Push – between the two actions, they would have to be stored in the UI).

Remove: This will delete all the objects that match a specific request (essentially, a query). You can read more about the requests in the Adapter Actions - advanced parameters section.

+Execute: This is used to ask the external software to execute a specific command such as Run analysis, for example. Different adapters have different compatible commands: try searching the CTRL+SHIFT+B menu for "[yourSoftwareName] Command" to see if there is any available one.

+You might have noticed that the Adapter Actions take some other particular input parameters that need to be explained: the Requests, the ActionConfig, and the Tags.

+Their understanding is not essential to grasp the overall mechanics; however you can find their explanation in the Adapter Actions - Advanced parameters section of the wiki.

+The Adapter Actions have been designed using particular criteria that are explained in the next Wiki pages.

+Most users might be satisfied with knowing that they have been developed like this so they can cover all possible use cases, while retaining ease of use.

+Try some of the Samples and you should be good to go! 🚀

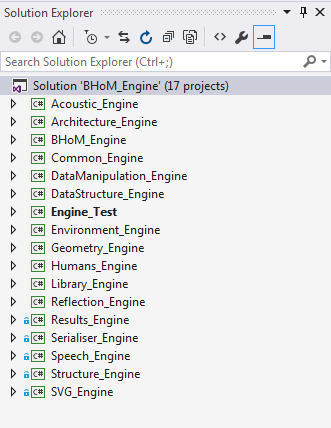

+The BHoM_Adapter is one of the base repositories, with one main Project called BHoM_Adapter.

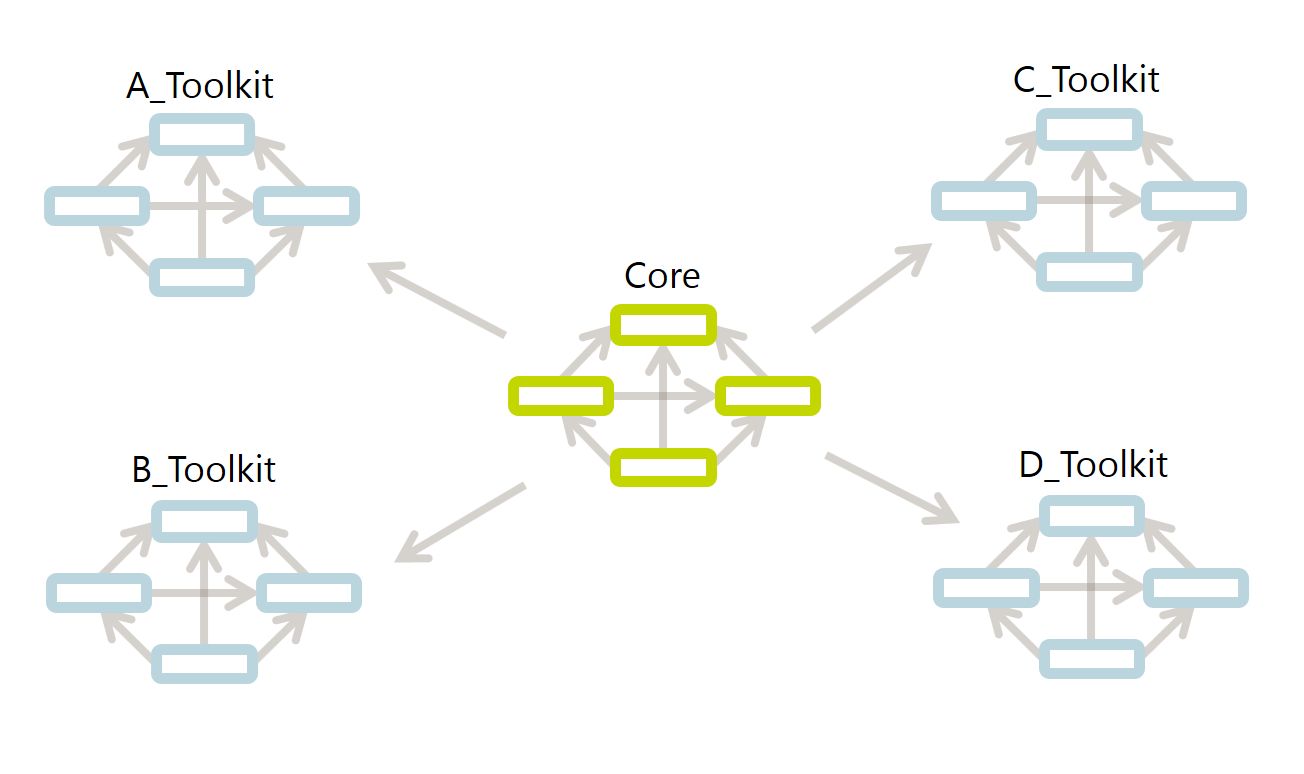

+That one is the base BHoM_Adapter. The base BHoM_Adapter includes a series of methods that are common to all software connections. Specific Adapter implementations are included in what we call the Toolkits. The base BHoM_Adapter is an abstract class that is implemented in each Toolkit's Adapter implementation. A Toolkit's Adapter extends the base BHoM_Adapter.

We will see how to create a Toolkit later; however consider that, in general, a Toolkit is simply a Visual Studio solution that can contain one or more of the following: +- A BHoM_Adapter project, that allows to implement the connection with an external software. +- A BHoM_Engine project, that should contain the Engine methods specific to your Toolkit. +- A BHoM_oM project, that should contain any oM class (types) specific to your Toolkit.

+When you want to contribute to the BHoM and create a new software connection, you will not need to implement the Adapter Actions, at least in most of the cases.

+If you need to, however, you can override them (more details on that in last page of this Wiki, where we explain how to implement an Adapter in a new BHoM Toolkit).

So what is it that you need to implement?

+The answer is: the so called CRUD Methods. We will see them in the next page.

+

+

+

+

+

+

+

+ BHoM_Engine methods are always included into a static class.

Different static classes define specific scopes for the methods they contain. There are 5 different static classes: +- Create - instantiate new objects +- Modify - modify existing objects +- Query - get properties from existing objects +- Compute - perform calculation given an existing object and/or some parameters +- Convert - transform an existing object into a different type +- External - reflects methods from external libraries

+Bar bar = Create.Bar(line);

Therefore the definition of the BHoMObject in the BHoM.dll should not contain any constructors (not even an empty default).

+With the exception of objects that implement IImmutable. See explanation of explicitly immutable BHoM Objects somewhere else. Later.

Object Initialiser syntax can be used with BHoM.dll only +e.g.

+Circle circ = new Circle { Centre = new Point { X = 10 } };

Grid grd = new Grid { Curves = new List<ICurve> { circ } };

.Rotate .Translate .MergeVertices .SetPropertyValue .Explode .SplitAt

Modify is not actually the correct term/tense now as we are immutable! But immutability is intrinsic in the strategy for the whole BHoM now so in the interest of clarity at both code and UI level Modify as a term is being used. Answers on a postcard for a better word!

+.Area .Mass .Distance .DotProduct

.Clone Could be interpreted as noun or verb, so works.

.Intersect

.IsPlanar .IsEqual .IsValid .IsClosed

In the case of explicitly immutable BHoM objects (see IImmutable), using this notation for derived properties will match notation of Readonly Properties also, which is neat.

.EquilibriumPosition

+.TextFromSpeech

+.Integrate

.Split

There will potentially be grey areas between methods being classed as Query or Compute, however in general it should be clear using the above guidelines and the distinction is important to ensure code is easily discoverable from both as an end user.

+.ToJson()

+.ToSVGString()

All convert methods must therefore be in a Convert Namespace within an _Engine project, thus separating this simple functionally from the _Adaptor project, in any software toolkits also.

+Constructors method, which returns a List<ConstructorInfo> that will be automatically reflected Methods method, which returns a List<MethodInfo> that will be automatically reflectedFor methods whose signature or return type includes one or more schemas that are not sourced from either the BH.oM or the System namespaces.

Keep GetGeometry and SetGeometery as method names - these perhaps to be still treated slightly differently through new IGeometrical interface? Discuss.

+Also allow an additional Objects Namespace where Engine code requires local class definitions for which there are good reasons to not promote to an _oM

+ + +

+

+

+

+

+

+

+ This page describes the view quality conventions that are used within the BHoM. +The description is intended to be a non-technical guide and provide universal access to understanding the methods of calculation of different view quality metrics. Links to the relevant methods are provided for those who wish to view the C# implementation.

+Jump to the section of interest: +* Measure Cvalues + * Find focal points +* Measure Avalues + * Measure Occlusion +* Measure Evalues +* Background Information

+Avalue is the percentage of the spectator's view cone filled with the playing area.

+

Occlusion is the percentage of the spectator's view occluded by the heads of spectators in front.

+ +

+

Description coming soon...

+Hudson and Westlake. Simulating human visual experience in stadiums. Proceedings of the Symposium on Simulation for Architecture & Urban Design. Society for Computer Simulation International, (2015).

+ + +

+

+

+

+

+

+

+ The BHoM Engine repository contains all the functions and algorithms that process BHoM objects.

+As we saw in the introduction to the Object Model, this structure gives us a few advantages, in particular:

+The BH.Engine repository is structured to reflect this strategy. The Visual Studio Solution contains several different Projects:

+

Each of those projects takes care of a different type of functionality. The "main" project however is the BHoM_Engine project: this contains everything that allows for basic direct processing of BHoM objects. The other projects are designed around a set of algorithms focused on a specific area such as geometry, form finding, model laundry or even a given discipline such as structure.

+++Why so many projects?

+The main reason why the BHoM Engine is split in so many projects is to allow for a large number of people to be able to work simultaneously on different parts of the code.

+

+Keep in mind that every time a file is added, deleted or even moved, this changes the project file itself. Consequentially, submitting code to GitHub can become really painful when multiple people have modified the same files.

+Splitting code per project therefore limits the need to coordinate changes to the level of each focus group.

Another benefit will be visible when we get to the "Toolkit" level: having different project makes it easier to manage Namespaces and make certain functionalities "extendable" in other parts of the code, such as in Toolkits.

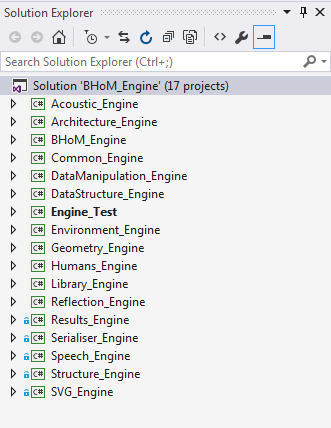

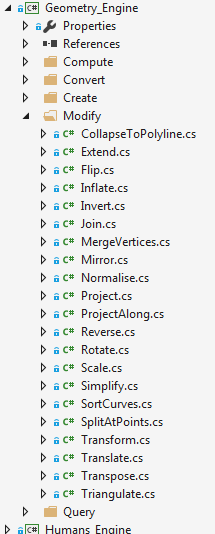

+If we look inside each Engine project, we can see that there are some folders. Those folders help categorize the code into specific actions.

+There are five possible action types that correspond to five different folder names: Compute, Convert, Create, Modify, and Query.

+Let's consider the Geometry_Engine project; we can see that it contains all of those folders:

+

Those five action names should be the same in all projects; however it's not mandatory that an Engine project should have all of them.

+Each folder contains C# files; those files must be named as the target of this action.

+In order to sort methods and organise them, 5 different categories of Engine methods exist. All methods will fall into one of these categories.

+IImmutable -- the only exception where constructors are allowed). You can define any number of methods that create the same objects via any combination of input parameters.If you are in doubt, try finding another file that does a similar thing in another project, and see where that is placed.

+For example, in the Geometry_Engine project there is a Query folder that contains, among others, a Length.cs file. This file contains methods that take care of Querying the Length for geometric objects. Consider that another equally named Length.cs file might be present in the Query folder of other Engine projects; this is the case, for example, of the Structure_Engine project, where the file contains method to compute the link of Bars (structural objects).

The file is structured in a slightly unusual way for people used to classic object-oriented programming, so let's look at an example. The following is an extract from the ClosestPoint.cs file of the Geometry_Engine project.

namespace BH.Engine.Geometry

+{

+ public static partial class Query

+ {

+ /***************************************************/

+ /**** Public Methods - Vectors ****/

+ /***************************************************/

+

+ public static Point ClosestPoint(this Point pt, Point point) {...}

+

+ /***************************************************/

+

+ public static Point ClosestPoint(this Vector vector, Point point) {...}

+

+ /***************************************************/

+

+ public static Point ClosestPoint(this Plane plane, Point point) {...}

+

+

+ /***************************************************/

+ /**** Public Methods - Curves ****/

+ /***************************************************/

+

+ public static Point ClosestPoint(this Arc arc, Point point) {...}

+

+ /***************************************************/

+

+ public static Point ClosestPoint(this Circle circle, Point point) {...}

+

+ /***************************************************/

+

+ ...

+ }

+}

+A few things should be noted:

+The Namespace always starts with BH.Engine followed by the project name (without the suffix "__Engine_", obviously).

The file should contain one and only one class, named like the containing folder. For example, any C# file contained in the "Query" folder will contain only one class called Query.

Consequently, the name of the file itself will not correspond to the name of the class, as it is usually recommended in Object Oriented Programming. The file name will generally only reflect the name of the methods defined in it.

+Note that the class is declared as a partial class. Also note that the class is declared as static.

+++Static and partial

+The last point might be a bit cryptic for those that are not fluent in C#. Here is a brief explanation that should be enough to move on the next topics.

+static means that the content of the class is available without the need to create (instantiate) an object of that class. However, that requires that all the functions contained in the class are declared static as well.

+On the other hand, partial means that the full content of that class can be spread between multiple files.

+Having the engine action classes declared as static and partial helps us simplifying the structure of the code and expose only the relevant bits to the average contributors.

+

Fluent C# users should have no problem understanding the structure of Engine classes.

+For those that want to get stuck without too many technical details, here are a few instructions on how to edit the action classes.

+. notation. For example, if you have an instance of an Arc type called myArc, you will be able to do myArc.ClosestPoint(refPoint). This way of defining functions is called Extension Methods and will be better explained below.namespace BH.Engine.Geometry

+{

+ public static partial class Modify

+ {

+ /***************************************************/

+ /**** Public Methods ****/

+ /***************************************************/

+

+ public static Mesh MergeVertices(this Mesh mesh, double tolerance = 0.001) //TODO: use the point matrix {...}

+

+

+ /***************************************************/

+ /**** Private Methods ****/

+ /***************************************************/

+

+ private static void SetFaceIndex(List<Face> faces, int from, int to) {...}

+

+

+ /***************************************************/

+ /**** Private Definitions ****/

+ /***************************************************/

+

+ private struct VertexIndex {...}

+ }

+}

+++Advanced topics

+While you might be able to write code in the BHoM Engine for a time without needing more than what has been explained so far, you should try to read the rest of the page.

+

+The concepts presented below are a bit more advanced; if you follow them, however, you will be able to provide a better experience to those using your code. Knowing what Polymorphism is and what the C#dynamictype is will also likely get you out of problematic situations, especially when you are using code from people that have not read the rest of this page.

A concept that is very useful in order to improve the use of your methods is the concept of extension methods. You can see on the example code below that we get the bounding box of a set of mesh vertices (i.e. a List of Points) by calling mesh.Vertices.Bounds(). Obviously, the List class doesn't have a Bounds method defined in it. The same goes for the BHoM objects; they even don't contain any method at all. The definition of the Bound method is actually in the BHoM Engine. In order for any BHoM objects (and even a List) to be able to call self.Bounds(), we use extension methods. Those are basically injecting functionality into an object from the outside. Let's look into how they work:

+namespace BH.Engine.Geometry

+{

+ public static partial class Query

+ {

+ ...

+

+ /***************************************************/

+ /**** public Methods - Others ****/

+ /***************************************************/

+

+ public static BoundingBox Bounds(this List<Point> pts) {...}

+

+ /***************************************************/

+

+ public static BoundingBox Bounds(this Mesh mesh)

+ {

+ return mesh.Vertices.Bounds();

+ }

+

+ /***************************************************/

+

+ ...

+

+ }

+}

+Here is the properties of the Mesh object for reference:

+namespace BH.oM.Geometry

+{

+ public class Mesh : IBHoMGeometry

+ {

+ /***************************************************/

+ /**** Properties ****/

+ /***************************************************/

+

+ public List<Point> Vertices { get; set; } = new List<Point>();

+

+ public List<Face> Faces { get; set; } = new List<Face>();

+

+

+ /***************************************************/

+ /**** Constructors ****/

+ /***************************************************/

+

+ ...

+ }

+}

+Notice how each method has a this in front of their first parameter. This is all that is needed for a static method to become an extension method. Note that we can still calculate the bounding box of a geometry by calling BH.Engine.Geometry.Query.Bounds(geom) instead of geom.Bounds() but this is far more cumbersome.

+To be complete, we should also mention that we could simply call Query.Bounds(geom) as long as using BH.Engine.Geometry is defined at the top of the file.

+While not completely necessary to be able to write methods for the BHoM Engine, Polymorphism is still a very important concept to understand. Consider the case where we have a list of objects and we want to calculate the bounding box of each of them. We want to be able to call Bounds() on each of those object without having to know what they are. More concretely, let's consider we want to calculate the bounding box of a polycurve. In order to do so, we need to first calculate the bounding box of each of its sub-curve but we don't know their type other that it is a form of curve (i.e. line, arc, nurbs curve,...). Note that ICurve is the interface common to all the curves.

+namespace BH.Engine.Geometry

+{

+ public static partial class Query

+ {

+ ...

+

+ /***************************************************/

+

+ public static BoundingBox Bounds(this PolyCurve curve)

+ {

+ List<ICurve> curves = curve.Curves;

+

+ if (curves.Count == 0)

+ return null;

+

+ BoundingBox box = Bounds(curves[0] as dynamic);

+ for (int i = 1; i < curves.Count; i++)

+ box += Bounds(curves[i] as dynamic);

+

+ return box;

+ }

+

+ /***************************************************/

+

+ ...

+

+ }

+}

+Polymorphism, as defined by Wikipedia, is the provision of a single interface to entities of different types. This means that if we had a method Bounds(ICurve curve) defined somewhere, thanks to polymorphism, we could pass it any type of curve that inherits from the interface ICurve.

+The other way around doesn't work though. If you have a series of methods implementing Bounds() for every possible ICurve, you cannot call Bounds(ICurve curve) and expect it to work since C# has no way of making sure that all the objects inheriting from ICurve will have the corresponding method. In order to ask C# to trust you on this one, you use the keyword dynamic as shown on the example above. This tells C# to figure out the real type of the ICurve during execution and call the corresponding method.

+Alright. Let's summarize what we have learnt from the last two sections:

+Using method overloading (all methods of the same name taking different input types), we don't need a different name for each argument type. So for example, calling Bounds(obj) will always work as long as there is a Bounds methods accepting the type of obj as first argument.

+Thanks to extension methods, we can choose to call a method like Bound by either calling Query.Bounds(obj) or obj.Bounds().

+Thanks to the dynamic type, we can call a method providing an interface type that has not been explicitly covered by a method definition. For example, We can call Bounds on an ICurve even if Bounds(ICurve) is not defined.

Great! We are still missing one case though: what if we want to call obj.Bounds() when obj is an ICurve? So on the example of the PolyCurve provided above, what if we wanted to replace

+ +with + +But why? We have a perfectly valid way to call Bounds on an ICurve already with the first solution. Why the need for another way? Same thing as for the extention methods: it is more compact and being able to have auto-completion after the dot is very convenient when you don't know/remember the methods available.

+So if you want to be really nice to the people using your methods, there is a solution for you:

+namespace BH.Engine.Geometry

+{

+ public static partial class Query

+ {

+ ...

+

+ /***************************************************/

+ /**** Public Methods - Interfaces ****/

+ /***************************************************/

+

+ public static BoundingBox IBounds(this IBHoMGeometry geometry)

+ {

+ return Bounds(geometry as dynamic);

+ }

+ }

+}

+If you add this code at the end of your class, this code will now work:

+ +Two comments on that: +- We used IBHoMGeometry here because every geometry implements Bounds, not just the ICurves. ICurve being a IBHoMGeometry, it will get access to IBounds(). (Read the section on polymorphism again if that is not clear to you why). In the case of a method X only supporting curves such as StartPoint for example, our interface method will simply be StartPoint(ICurve). +- The "I" in front of IBounds() is VERY IMPORTANT. If you simply call that method Bounds, it will have same name as the other methods with specific type. Say you call this method with a geometry that doesn't have a corresponding Bounds method implemented so the only one match is Bounds(IBHoMGeometry). In that case, Bounds(IBHoMGeometry) will call itself after the conversion to dynamic. You therefore end up with an infinite loop of the method calling itself.

+PS: before anyone asks, using ((dynamic)curve).Bounds(); is not an option. Not only it crashes at run-time (dynamic and extension methods are not supported together in C#), it will not provides you with the auto completion you are looking for since the real type cannot be know statically.

+But what if we do not have a method implemented for every type that that can be dynamically called by IBounds? That is what private fallback methods are for. In general fallback methods are used for handling unexpected behaviours of main method. In this case it should log an error with a proper message (see Handling Exceptional Events for more information) and return null or NaN.

+namespace BH.Engine.Geometry

+{

+ public static partial class Query

+ {

+ ...

+

+ /***************************************************/

+ /**** Private Methods - Fallback ****/

+ /***************************************************/

+

+ private static BoundingBox Bounds(IGeometry geometry)

+ {

+ Reflection.Compute.RecordError($"Bounds is not implemented for IGeometry of type: {geometry.GetType().Name}.");

+ return null;

+ }

+

+ /***************************************************/

+

+ ...

+ }

+}

+Being private and having an interface as the input prevents it from being accidentally called. It will be triggerd only if IBounds() couldn't find a proper method for the input type.

+Additional comment:

+- At this moment BHoM does not handle nullable booleans. This means it is impossible to return null from a bool method. In such cases fallback methods can throw [NotImplementedException].

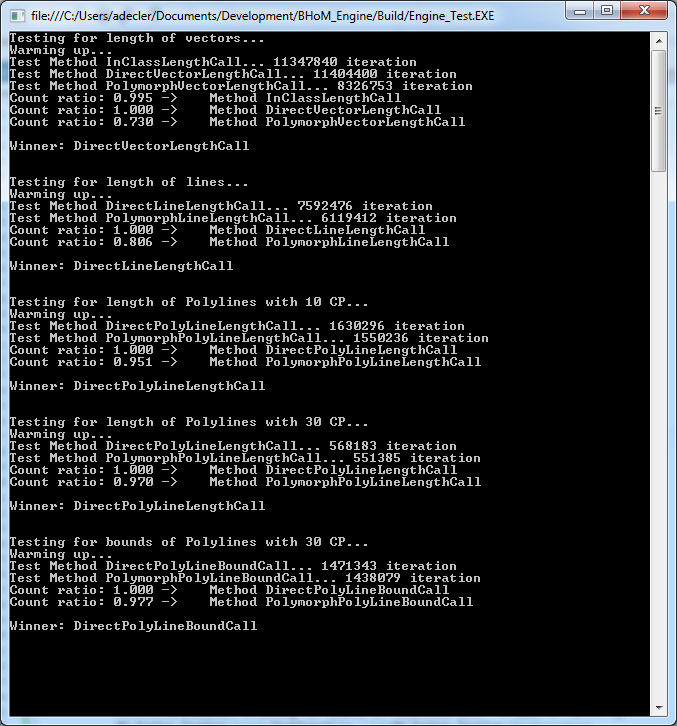

For the most experienced developers among you, some might worried about execution speed of this solution. Indeed, we are not only using extension methods but also the conversion to a dynamic object. This approach means that every method call of objects represented by an interface is actually translated into two (call to the public polymorphic methods and then to the private specific one).

+Thankfully, tests have shown that efficiency lost is minimal even for the smallest functions. Even a method that calculates the length of a vector (1 square root, 3 multiplications and 2 additions) is running at about 75% of the speed, which is perfectly acceptable. As soon as the method become bigger, the difference becomes negligible. Even a method as light as calculating the length of a short polyline doesn't show more than a few % in speed difference.

+

The concept of polymorphic extension methods explained above has one serious limitation: it works only if all methods aimed to be called by the dynamically cast object are contained within one class. That is not the case e.g. for Geometry method, which is divided into a series of Query classes spread across discipline-specific namespaces: BH.Engine.Structure, BH.Engine.Geometry etc. To enable IGeometry method, a special pattern based on RunExtensionMethod needs to be applied:

namespace BH.Engine.Spatial

+{

+ public static partial class Query

+ {

+ /******************************************/

+ /**** IElement0D ****/

+ /******************************************/

+

+ [Description("Queries the defining geometrical object which all spatial operations will act on.")]

+ [Input("element0D", "The IElement0D to get the defining geometry from.")]

+ [Output("point", "The IElement0Ds base geometrical point object.")]

+ public static Point IGeometry(this IElement0D element0D)

+ {

+ return Reflection.Compute.RunExtensionMethod(element0D, "Geometry") as Point;

+ }

+

+ /******************************************/

+ }

+}

+RunExtensionMethod method is a Reflection-based mechanism that runs the extension method relevant to type of the argument, regardless the class in which that actual method is implemented. In the case above, IGeometry method belongs to BH.Engine.Spatial.Query class, while e.g. the method for BH.oM.Geometry.Point (which implements IElement0D interface) would be in BH.Engine.Geometry.Query - thanks to calling RunExtensionMethod instead of dynamic casting it can be called successfully. The next code snippet shows the same mechanism for methods with more than one input argument (in this case being an IElement0D to be modified and a Point to overwrite the geometry of the former).

namespace BH.Engine.Spatial

+{

+ public static partial class Modify

+ {

+ /******************************************/

+ /**** IElement0D ****/

+ /******************************************/

+

+ [Description("Modifies the geometry of a IElement0D to be the provided point's. The IElement0Ds other properties are unaffected.")]

+ [Input("element0D", "The IElement0D to modify the geometry of.")]

+ [Input("point", "The new point geometry for the IElement0D.")]

+ [Output("element0D", "A IElement0D with the properties of 'element0D' and the location of 'point'.")]

+ public static IElement0D ISetGeometry(this IElement0D element0D, Point point)

+ {

+ return Reflection.Compute.RunExtensionMethod(element0D, "SetGeometry", new object[] { point }) as IElement0D;

+ }

+

+ /******************************************/

+ }

+}

+Naturally, in order to enable the use of RunExtensionMethod pattern by a given type, a correctly named extension method taking argument of such type needs to be implemented.

+

+

+

+

+

+

+

+ For an user perspective on the UIs, you might be looking for Using the BHoM.

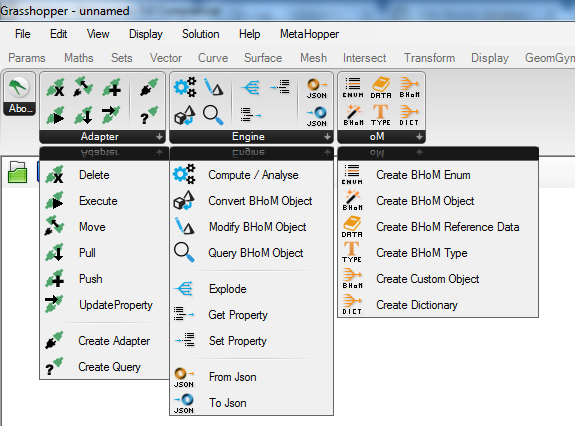

+The UI layer has been designed so that it will automatically pick everything implemented in the BHoM, the Engines and the Adapters without the need to change anything on the code of the UI.

+Here's what the menu looks like in Grasshopper. The number of component there doesn't have to change when more functionality is added to the rest of the code:

+

When dropped on the cavas, most of those components will have no input and no output. They will be converted to their final form once you have selected what they need to be in their menu:

+

You can get more information on how to use one of the BHoM UI on this page.

+BHoMObjectBHoMObjects are rich objects, which may or may not contain a geometry representation.

+If a geometry representation can be extracted, either from one of its properties, or as a result of their manipulation, it can be used to automatically render the object in the GUIs. The only action to enable that, is to create a Query.Geometry method, whose only parameter is the object you want to display, and place it in the Engine namespace that corresponds to the oM of the object. The method has to return an IGeometry or one of its assignable types.

For example, let's assume I want to automatically display a BH.oM.Structure.Elements.Bar. I'd do as follows:

+1. Go into the correspondent Engine - i.e. BH.Engine.Structure

+1. Go into the Query folder - i.e. BH.Engine.Structure.Query

+1. If it does not exist yet, create a Geometry.cs file

+1. Add an extension method name Geometry, whose only parameter is the object you want to display:

+

public static Line Geometry(this BH.oM.Structure.Elements.Bar bar)

+{

+ // Extract your geometry

+ return calculatedGeoemtry

+}

+Most of the functionality required by every UI has already been ported to the BHoM_UI repository or to the Engine (when used in more than the UIs). This makes the creation of a new UI a lot less cumbersome but this is still by no mean a small task. I would recommend to reach out to those that have already worked on UI (check the contributors of those repos) before you start writing a new UI from scratch.

+ + +

+

+

+

+

+

+

+ This page describes the Units conventions for the BHoM.

+The BHoM framework adheres as much as possible to the conventions of the SI system.

+Any Engine method must operate in SI to avoid complexity of Unit Conversions inside calculations. +Conversion to and from SI is the responsibility of the Converts inside the Adapters.

+When some units (derived or not) are not explicitly covered by this Wiki page, it is generally safe to assume that measures expressed in SI units will not be converted by the BHoM.

+The Localisation_Toolkit provides support for conversion between SI and other units systems.

+BHoM object properties can be decorated with a Quantity Attribute to define (in SI) what unity the property should be considered in.

+This is to be applied only to properties that are of a primitive numerical type, e.g. int, double, etc.

See Quantities_oM for the available attributes.

+ + +

+

+

+

+

+

+

+ The IImmutable interface makes an object unmodifiable after it was instantiated. In order to modify an IImmutable object, a new object with the desired properties needs to be instantiated, where all the properties that are required to stay the same should be copied from the old object.

+IImmutable should be implemented:

+a) if objects instantiated from a class should not be modifiable, by design, in some or all of its properties;

+b) if objects contain properties that are non-orthogonal.

Whilst reason (a) is self-explanatory, (b) is due to a specific problem that non-orthogonal properties expose.

+As a reminder, a class with ortogonal properties is a class whose properties all contain information that cannot be derived from other properties. Orthogonality is a software design principle for writing components in a way that changing one component doesn’t affect other components. For example, an orthogonal "Column" class may define a Start Point and an End Point as separate properties, but then it cannot define a third property called “Line” which goes between a start point and an end point, as it would be redundant: modifying the start or end point would require to modify the Line property too. For this reason, class with non-orthogonal properties should implement the IImmutable interface, because the consistency of its properties can be guaranteed only when the class is instantiated.

To implement the IImmutable interface, you need to make two actions:

public class YourObject : IImmutablepublic, get only, and contain a default value. i.e. public string Title { get; } = ""public, get and set, and have a default valueFor an example, you can check the BH.oM.Structure.SectionProperties.SteelSection from the Structure_oM:

+Steel Section example

+

+

+

+

+

+

+

+ The following points outlines the use of the dimensional interfaces as well as extension methods required to be implemented by them for them to function correctly in the Spatial_Engine methods.

+Please note that for classes that implement any of the following analytical interfaces, an default implementation already exists in the Analytical_Engine and for those classes an implementation is only needed if any extra action needs to be taken for that particular case. The analytical interfaces with default support are:

+| Analytical Interface | +Dimensional interface implemented | +

|---|---|

INode |

+IElement0D |

+

ILink<TNode> |

+IElement1D |

+

IEdge |

+IElement1D |

+

IOpening<TEdge> |

+IElement2D |

+

IPanel<TEdge, TOpening> |

+IElement2D |

+

Please note that the default implementations do not cover the mass interface IElementM.

If the BHoM class implements an IElement interface corresponding with its geometrical representation:

| Interface | +Implementing classes | +

|---|---|

IElement0D |

+Classes which can be represented by Point (e.g. nodes) |

+

IElement1D |

+Classes which can be represented by ICurve (e.g. bars) |

+

IElement2D |

+Classes which can be represented by a planar set of closed ICurves (e.g. planar building panels) |

+

IElementM |

+Classes which is containing matter in the form of a material and a volume | +

It needs to have the following methods implemented in it's oM-specific Engine:

+| Interface | +Required methods | +Optional methods | +When | +

|---|---|---|---|

IElement0D |

+

|

++ | |

IElement1D |

+

|

+

|

+IElement1D which endpoints are defined by IElement0D |

+

IElement2D |

+

|

+

|

+If the IElement2D has internal elements |

+

| + | + | + | + |

IElementM |

+

|

++ | + |

Spatial_Engine contains a default Transform method for all IElementXDs. This implementation only covers the transformation of the base geometry, and does not handle any additional parameters, such as local orientations of the element. For an object that contains this additional layer of information, a object specific Transform method must be implemented.

+

+

+

+

+

+

+

+

+ Geometry_oM is the core library, on which all engineering BHoM objects are based. It provides a common foundation that allows to store and represent spatial information about any type of object in any scale: building elements, their properties and others, both physical and abstract.

+All objects can be found here in the Geometry_oM

+The code is divided into a few thematic domains, each stored in a separate folder: +- Coordinate System +- Curve +- Interface +- Math +- Mesh +- Misc +- SettingOut +- ShapeProfiles +- Solid +- Surface +- Vector

+All classes belong to one namespace (BH.oM.Geometry) with one exception of Coordinate Systems, which live under BH.oM.Geometry.CoordinateSystem.

+All methods referring to the geometry belong to BH.Engine.Geometry namespace.

Two separate families of interfaces coexist in Geometry_oM. First of them organizes the classes within the namespace:

+| Interface | +Implementing classes | +

|---|---|

IGeometry |

+All classes within the namespace | +

ICurve |

+Curve classes | +

ISurface |

+Surface classes | +

The other extends the applicability of the geometry-related methods to all objects, which spatial characteristics are represented by a certain geometry type:

+| Interface | +Implementing classes | +

|---|---|

IElement0D |

+All classes represented by Point |

+

IElement1D |

+All classes represented by ICurve |

+

IElement2D |

+All classes represented by a planar set of closed ICurves (e.g. building panels) |

+

IElement3D |

+All classes represented by a closed volume (e.g. room spaces) - not implemented yet | +

There is a range of constants representing default tolerances depending on the tolerance type and scale of the model:

+| Scale | +Value | +

|---|---|

| Micro | +1e-9 | +

| Meso | +1e-6 | +

| Macro | +1e-3 | +

| Angle | +1e-6 | +

While being pulled/pushed through the Adapters, the BHoM geometry is converted to relevant geometry format used by each software package.

+BHoM Rhinoceros conversion table

+At the current stage, Geometry_oM bears a few limitations: +- Nurbs are not supported (although there is a framework for them in place) +- 3-dimensional objects (curved surfaces, volumes etc.) are not supported with a few exceptions +- Boolean operations on regions contain a few bugs

+ + +

+

+

+

+

+

+

+ This page covers Structural and Geometrical conventions for the BHoM framework.

+The following local coordinate system is adopted for 1D-elements e.g. beams, columns etc:

+Linear elements

+For non-vertical members the local z is aligned with the global z and rotated with the orientation angle around the local x.

+For vertical members the local y is aligned with the global y and rotated with the orientation angle around the local x.

+A bar is vertical if its projected length to the horizontal plane is less than 0.0001, i.e. a tolerance of 0.1mm on verticality.

+Curved planar elements

+For curved elements the local z is aligned with the normal of the plane that the curve fits in and rotated around the curve axis with the orientation angle.

+Area - Area of the section property

+Iy - Second moment of area, major axis

+Iz - Second moment of area, minor axis

+Wel,y - Elastic bending capacity, major axis

+Wel,z - Elastic bending capacity, minor axis

+Wpl,y - Plastic bending capacity, major axis

+Wpl,z - Plastic bending capacity, minor axis

+Rg,y - Radius of gyration, major axis

+Rg,z - Radius of gyration, minor axis

Vz - Distance centre to top fibre

+Vp,z - Distance centre to bottom fibre

+Vy - Distance centre to rightmost fibre

+Vp,y - Distance centre to leftmost fibre

+As,z - Shear area, major axis

+As,y - Shear area, minor axis

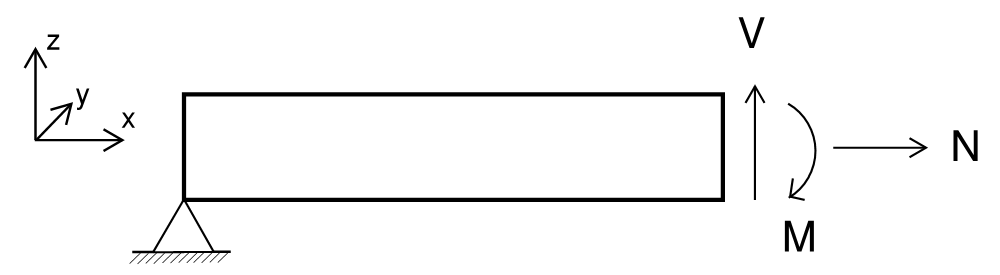

The directions for the section forces in a cut of a beam can be seen in the image below:

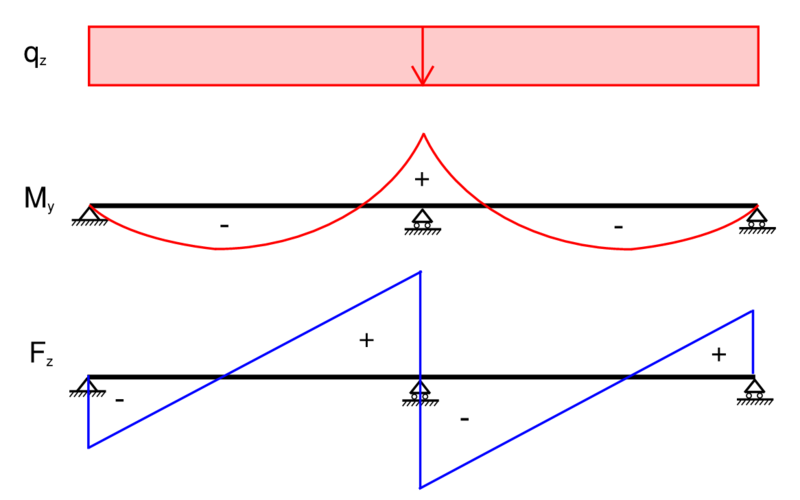

+

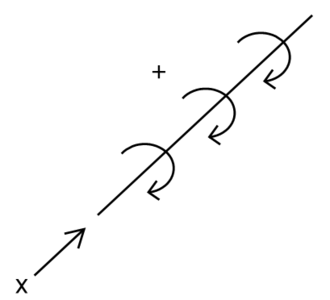

This is: +* Normal force positive along the local x-axis +* Shear forces positive along the local y and z-axes +* Bending moments positive around the local axis by using the right hand rule

+This leads to the following:

+Positive (+) = Tension

+Negative (-) = Compression

As shown in the following diagram.

+

Same sign convention as for major axis.

+The torsional moment follows the Right-hand rule convention.

+

Bar offsets specify a local vector from the bars node to where the bar is calculated from, with a rigid link between the Node object and the analysis bars end point.

+Hence: +* a BHoM bars nodes are where it attaches to other nodes, +* offsets are specified in the local coordinate system and is a translation from the node, +* local x = bar.Tangent(); +* local z = bar.Normal(); +* node + offset is where the bar node is analytically +* the space between is a rigid link

+ + +

+

+

+

+

+

+

+ It is here outlined how BHoM calculates shear area for a section

+Shear Area formula used for calculation:

+

+And A(x) is defined as all the points less than x within the region A.

Moment of inertia is know and hence the denominator will be the focus.

+

Sy for an area can be calculated for a region by its bounding curves with Greens Therom:

+

+which for line segments is:

+

And while calculating this for the entire region as line segments is easy, we want to have the regions size as a variable of x.

+So we make some assumptions about the region we are evaluating.

+* Its upper edge is always on the X-axis

+* No overhangs

i.e. its thickness at any x is defined by its lower edge,

+achieved by using WetBlanketIntegration()

+Example:

+

We will then calculate the solution for each line segment from left to right.

+This is important as Sy is dependent on everything to the left of it.

We then split the solution for Sy into three parts: +* S0, The partial solution for every previous line, i.e. sum until current +* The current line segment with variable t +* A closing line segment with variable t, connects the end of the current line segment to the X-axis

+Closing along the X-axis is not needed as the horizontal solution is always zero.

+Visual representation of the area it works on:

+

We will now want to define all variables in relation to t

+

And then plug everything into the integral

+

WetBlanketInterpetation()  +

+

+

+

+

+

+

+ This section introduces the BHoMObject, which is the foundational class for most of the Objects found in BHoM.

We also introduce the IObject, the base interface for everything in BHoM.

A typical BHoM object definition is given simply by defining a class with some public properties. That's it! No constructors or anything needed here.

+Here is an example of what a BHoM object definition looks like:

+using BH.oM.Base;

+using BH.oM.Geometry;

+

+namespace BH.oM.Acoustic

+{

+ public class Speaker : BHoMObject

+ {

+ /***************************************************/

+ /**** Properties ****/

+ /***************************************************/

+

+ public Point Position { get; set; } = new Point();

+

+ public Vector Direction { get; set; } = new Vector();

+

+ public string Category { get; set; } = "";

+

+ /***************************************************/

+ }

+}

+In general, most classes defined in BHoM are a BHoM object, except particular cases.

+Among these exceptions, you can find Geometry and Result types.